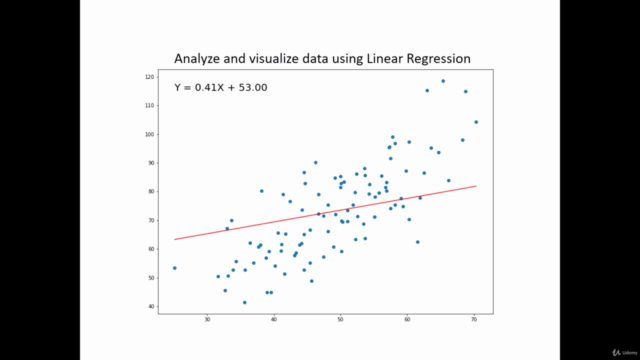

You'll implement both today – simple linear regression from scratch and multiple linear regression with built-in R functions.

Coefficients are multiplied with the corresponding input variables, and in the end, the bias (intercept) term is added. That's how the linear regression model generates the output.

You can use a linear regression model to learn which features are important by examining its coefficients. If a coefficient is close to zero, the corresponding feature is considered less important than if the coefficient were a large positive or negative value.

There's still one thing we should cover before diving into the code – the assumptions of a linear regression model:

- Linear assumption - the model assumes that the relationship between variables is linear.
- No noise - the model assumes that the input and output variables are not noisy, so remove outliers if possible.
- No collinearity - highly correlated input variables make the coefficient estimates unstable, and the model will tend to overfit.
- Normal distribution - the model will make more reliable predictions if your input and output variables are normally distributed. If that's not the case, try applying some transforms to your variables to make them more normal-looking.
- Rescaled inputs - use scalers or normalizers to make more reliable predictions.

You should be aware of these assumptions every time you're creating linear models. We'll ignore most of them for the purpose of this article, as the goal is to show you the general syntax you can copy-paste between projects.

If you have a single input variable, you're dealing with simple linear regression. It won't be the case most of the time, but it can't hurt to know. A simple linear regression can be expressed as:

$$y = \beta_0 + \beta_1 x$$

The coefficients $\beta_0$ and $\beta_1$ are obtained first and then wrapped into a `simple_lr_predict()` function that implements the line equation. The predictions can then be obtained by applying `simple_lr_predict()` to the vector `x`; they should all lie on a single straight line.

```r
# Calculate coefficients
b1 <- (sum((x - mean(x)) * (y - mean(y)))) / (sum((x - mean(x))^2))
b0 <- mean(y) - b1 * mean(x)

# Define function for generating predictions
simple_lr_predict <- function(x) {
  return(b0 + b1 * x)
}

# Apply simple_lr_predict() to input data
simple_lr_predictions <- sapply(x, simple_lr_predict)
simple_lr_data$yhat <- simple_lr_predictions
```

Finally, input data and predictions are visualized with the ggplot2 package:

```r
library(ggplot2)
# Visualize input data and the best fit line
ggplot(data = simple_lr_data, aes(x = x, y = y)) +
  geom_point() + geom_line(aes(y = yhat))
```
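To sanity-check the from-scratch least-squares coefficients, here is a minimal, self-contained sketch that compares `b0` and `b1` against the estimates from R's built-in `lm()`. It assumes synthetic data generated with `rnorm()`; the seed, sample size, and noise level are arbitrary choices, not the article's original data.

```r
# Synthetic data (assumption: arbitrary seed, sample size, and noise level)
set.seed(42)
x <- seq(from = 1, to = 300)
y <- rnorm(n = 300, mean = x + 2, sd = 25)

# Least-squares coefficients computed from scratch
b1 <- sum((x - mean(x)) * (y - mean(y))) / sum((x - mean(x))^2)
b0 <- mean(y) - b1 * mean(x)

# The same model fitted with R's built-in lm()
fit <- lm(y ~ x)
lm_coefs <- unname(coef(fit))  # c(intercept, slope)

# Both approaches should agree up to floating-point error
print(c(b0 = b0, b1 = b1))
print(all.equal(c(b0, b1), lm_coefs))
```

If the two sets of coefficients match, the hand-written formula is doing exactly what `lm()` does for a single input variable.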
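The idea of judging feature importance by coefficient size can be sketched with `lm()` on two synthetic predictors. The names `x1` and `x2`, their true effects (3 and 0), and the use of `scale()` to standardize the inputs are assumptions made for illustration; standardizing puts the coefficients on a comparable scale, so their magnitudes can be ranked directly.

```r
set.seed(1)
n <- 500
x1 <- rnorm(n)
x2 <- rnorm(n)
# Hypothetical ground truth: y depends on x1, while x2 has no real effect
y <- 5 + 3 * x1 + rnorm(n)

# Standardize predictors so coefficient magnitudes are comparable
mlr_data <- data.frame(
  y = y,
  x1 = as.numeric(scale(x1)),
  x2 = as.numeric(scale(x2))
)
fit <- lm(y ~ x1 + x2, data = mlr_data)
coefs <- coef(fit)
print(coefs)

# Rank features by absolute coefficient size (intercept excluded)
importance <- sort(abs(coefs[-1]), decreasing = TRUE)
print(importance)
```

The coefficient for `x2` should land close to zero, which is exactly the "less important feature" signal described earlier.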
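The no-collinearity assumption can be checked before fitting by looking at pairwise correlations between the inputs. This is a minimal sketch, assuming synthetic predictors where `x2` is deliberately built as a noisy copy of `x1`, and an arbitrary cutoff of 0.8 for what counts as "highly correlated".

```r
set.seed(7)
n <- 200
x1 <- rnorm(n)
x2 <- x1 + rnorm(n, sd = 0.1)  # nearly a copy of x1: strongly collinear
x3 <- rnorm(n)                  # independent of the other inputs
inputs <- data.frame(x1, x2, x3)

cor_matrix <- cor(inputs)
print(round(cor_matrix, 2))

# Flag each pair (upper triangle only) whose absolute correlation
# exceeds the hypothetical threshold
threshold <- 0.8
high <- which(abs(cor_matrix) > threshold & upper.tri(cor_matrix), arr.ind = TRUE)
flagged <- data.frame(
  var1 = rownames(cor_matrix)[high[, "row"]],
  var2 = colnames(cor_matrix)[high[, "col"]]
)
print(flagged)
```

Any flagged pair is a candidate for dropping one of the variables or combining them before building the model.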
